25 Great Quotes for Software Testers

All the quotes below are from the inside cover of Statistics for Experimenters, written by George Box, Stuart Hunter, and William G. Hunter (my late father). The Design of Experiments methods expressed in the book (namely, the science of finding out as much information as possible in as few experiments as possible) were the inspiration behind our software test case generating tool. In paging through the book again today, I found it striking (but not surprising) how many of these quotes are directly relevant to efficient and effective software testing (and to efficient and effective test case design strategies in particular):

- "Discovering the unexpected is more important than confirming the known." - George Box

- "All models are wrong; some models are useful." - George Box

- "Don't fall in love with a model."

- How, with a minimum of effort, can you discover what does what to what? Which factors do what to which responses?

- "Anyone who has never made a mistake has never tried anything new." - Albert Einstein

- "Seek computer programs that allow you to do the thinking."

- "A computer should make both calculations and graphs. Both sorts of output should be studied; each will contribute to understanding." - F. J. Anscombe

- "The best time to plan an experiment is after you've done it." - R. A. Fisher

- "Sometimes the only thing you can do with a poorly designed experiment is to try to find out what it died of." - R. A. Fisher

- The experimenter who believes that only one factor at a time should be varied, is amply provided for by using a factorial experiment.

- Only in exceptional circumstances do you need or should you attempt to answer all the questions with one experiment.

- "The business of life is to endeavor to find out what you don't know from what you do; that's what I called 'guessing what was on the other side of the hill.'" - Duke of Wellington

- "To find out what happens when you change something, it is necessary to change it."

- "An engineer who does not know experimental design is not an engineer." - Comment made to one of the authors by an executive of the Toyota Motor Company

- "Among those factors to be considered there will usually be the vital few and the trivial many." - J. M. Juran

- "The most exciting phrase to hear in science, the one that heralds discoveries, is not 'Eureka!' but 'Now that's funny...'" - Isaac Asimov

- "Not everything that can be counted counts and not everything that counts can be counted." - Albert Einstein

- "You can see a lot by just looking." - Yogi Berra

- "Few things are less common than common sense."

- "Criteria must be reconsidered at every stage of an investigation."

- "With sequential assembly, designs can be built up so that the complexity of the design matches that of the problem."

- "A factorial design makes every observation do double (multiple) duty." - Jack Youden

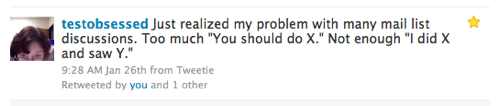

Where the quotes are not attributed, I'm assuming the quote is from one of the authors. The best known quote on the list, "All models are wrong; some models are useful," is widely attributed to George Box in particular, which is accurate. I suspect most of the unattributed quotes are from George (as opposed to Stu or my dad), although I forgot to confirm that suspicion with him when I saw him over Christmas break. George is 90 now and still off-the-charts smart and funny, and he is probably the best storyteller I've met in my life. If he were younger and on Twitter, he'd be one of those guys who churn out highly retweetable chestnuts again and again. [Update - George Box died in 2013]

Related thoughts

As you know if you've read my blog before, I am a strong proponent of taking the Design of Experiments principles laid out in this book and applying them in the field of software testing to improve the efficiency and effectiveness of software test case design (e.g., by using pairwise software testing, orthogonal array software testing, and/or combinatorial software testing techniques). In fact, I decided to create my company's test case generating tool, called Hexawise, after using Design of Experiments-based test design methods on a couple dozen projects during my time at Accenture and measuring dramatic improvements in tester productivity (as well as dramatic reductions in the amount of time it took to identify and document test cases). We saw these improvements in every single pilot project in which we used these methods to identify tests.
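For readers who have not seen pairwise testing before, here is a minimal sketch of the idea in Python. It is not how Hexawise or any other tool works internally; it is a simple greedy search that I am using only for illustration, and the function name `pairwise_tests` and the example parameters (browser, OS, account type) are made up. The goal is a small set of tests in which every pair of values from any two parameters appears together at least once.

```python
from itertools import combinations, product


def pairwise_tests(parameters):
    """Greedy all-pairs sketch: return test cases (dicts) covering every value pair.

    `parameters` maps a parameter name to its list of possible values.
    This enumerates every full combination, so it only suits small examples.
    """
    names = list(parameters)

    # Every pair of (parameter, value) assignments that must appear in some test.
    uncovered = {
        ((a, va), (b, vb))
        for a, b in combinations(names, 2)
        for va in parameters[a]
        for vb in parameters[b]
    }

    # All exhaustive combinations, used as the pool of candidate tests.
    candidates = [dict(zip(names, combo)) for combo in product(*parameters.values())]

    tests = []
    while uncovered:
        def gain(test):
            # How many still-uncovered pairs this candidate would cover.
            return sum(
                1 for (a, va), (b, vb) in uncovered
                if test[a] == va and test[b] == vb
            )

        best = max(candidates, key=gain)
        tests.append(best)
        uncovered = {
            ((a, va), (b, vb))
            for (a, va), (b, vb) in uncovered
            if not (best[a] == va and best[b] == vb)
        }
    return tests


# Hypothetical example parameters, chosen only for illustration.
example = {
    "browser": ["chrome", "firefox", "safari"],
    "os": ["windows", "macos", "linux"],
    "account": ["guest", "member"],
}

if __name__ == "__main__":
    selected = pairwise_tests(example)
    print(f"exhaustive combinations: {3 * 3 * 2}")
    print(f"pairwise tests selected: {len(selected)}")
    for test in selected:
        print(test)
```

With only three parameters the savings are modest, but the gap between exhaustive and pairwise test counts grows very quickly as parameters and values are added, which is where dedicated tools earn their keep.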

My goal, in continuing to improve our Hexawise test case generating tool, is to help make the efficiency-enhancing Design of Experiments methods embodied in the book accessible to "regular" software testers, and more broadly adopted throughout the software testing field. Some days, it feels like a shame that the approaches from the Design of Experiments field (extremely well known and broadly used in manufacturing industries across the globe, in research and development labs of all kinds, and in product development projects in chemicals, pharmaceuticals, and a wide variety of other fields) have not made much of an inroad into software testing. The irony is that it is hard to think of a field in which it is easier, quicker, or more immediately obvious to prove that dramatic benefits result from adopting Design of Experiments methods than software testing. All it takes is for a testing team to decide to do a simple proof of concept pilot. It could be for as little as a half-day's testing activity for one tester. Create a set of pairwise tests with Hexawise or another tool like James Bach's AllPairs tool. Have one tester execute the tests suggested by the test case generating tool. Have the other tester(s) test the same application in parallel. Measure four things:

- How long did it take to create the pairwise / DoE-based test cases?

- How many defects were found per hour by the tester(s) who executed the "business as usual" test cases?

- How many defects were found per hour by the tester who executed the pairwise / DoE-based tests?

- How many defects were identified overall by each plan's tests?

These four simple measurements will typically demonstrate dramatic improvements in:

- Speed of test case identification and documentation

- Efficiency in defects found per hour

As well as consistent improvements to:

- Overall thoroughness of testing.
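If it helps to see the bookkeeping, the comparison above can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `PilotArm` and `compare` names are hypothetical, recording design time for the "business as usual" tests is my addition so the two approaches can be compared side by side, and the numbers in the usage example are placeholders, not results from any real pilot.

```python
from dataclasses import dataclass


@dataclass
class PilotArm:
    """One side of the pilot: either 'business as usual' or pairwise / DoE-based."""
    name: str
    design_hours: float      # time spent identifying and documenting tests
    execution_hours: float   # time spent executing tests
    defects_found: int       # total defects identified by this plan's tests

    @property
    def defects_per_hour(self) -> float:
        if self.execution_hours <= 0:
            raise ValueError("execution_hours must be positive")
        return self.defects_found / self.execution_hours


def compare(baseline: PilotArm, pairwise: PilotArm) -> dict:
    """Summarize the pilot: design time saved, efficiency ratio, extra defects."""
    if baseline.defects_per_hour == 0:
        raise ValueError("baseline found no defects; the ratio is undefined")
    return {
        "design_hours_saved": baseline.design_hours - pairwise.design_hours,
        "defects_per_hour_ratio": pairwise.defects_per_hour / baseline.defects_per_hour,
        "extra_defects_found": pairwise.defects_found - baseline.defects_found,
    }


if __name__ == "__main__":
    # Placeholder numbers only; substitute the measurements from your own pilot.
    usual = PilotArm("business as usual", design_hours=4.0, execution_hours=4.0, defects_found=8)
    doe = PilotArm("pairwise / DoE-based", design_hours=1.0, execution_hours=4.0, defects_found=16)
    for key, value in compare(usual, doe).items():
        print(f"{key}: {value}")
```

A spreadsheet would do the same job; the point is simply to write the four measurements down for both approaches before drawing conclusions.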

A Suggestion: Experiment / Learn / Get the Data / Let the Efficiency and Effectiveness Findings Guide You

I would be thrilled if this blog post gave you the motivation to explore this testing approach and measure the results. Whether you've used similar-sounding techniques before or never heard of DoE-based software testing methods before, whether you're a software testing newbie or a grizzled veteran, I suspect the experience of running a structured proof of concept pilot (and seeing the dramatic benefits I'm confident you'll see) could be a watershed moment in your testing career. Try it! If you're interested in conducting a pilot, I'd be happy to help get you started and if you'd be willing to share the results of your pilot publicly, I'd be able to provide ongoing advice and test plan review. Send me an email or leave a comment.

To the grizzled and skeptical veterans (and yes, Mr. Shrini Kulkarni / @shrinik, who tweeted "@Hexawise With all due respect. I can't credit any technique the superpower of 2X defect finding capability. sumthng else must be goingon" before you actually conducted a proof of concept using Design of Experiments-based testing methods and analyzed your findings, I'm lookin' at you), I would (re)quote Sophocles: "One must try by doing the thing; for though you think you know it, you have no certainty until you try." For newer testers, eager to expand your testing knowledge (and perhaps gain an enormous amount of credibility by taking the initiative, while you're at it), I'd (re)quote Cole Porter: "Experiment and you'll see!"

I'd welcome your comments and questions. If you're feeling, "Sounds too good to be true, but heck, I can secure a tester for half a day to run some of these DoE-based / pairwise tests and gather some data to see whether or not it leads to a step-change improvement in efficiency and effectiveness of our testing" and you're wondering how you'd get started, I'd be happy to help you out and do so at no cost to you. All I'd ask is that you share your findings with the world (e.g., in your blog or let me use your data as the firms did with their findings in the "Combinatorial Software Testing" article below).

Related:

- (Introductory Hexawise video overview showing 6.5 trillion possible tests reduced, using Design of Experiments techniques, to the 37 tests most likely to find defects)

- (Article explaining the ideas behind Design of Experiments-based software testing techniques such as pairwise, OA, and n-wise testing: Combinatorial Software Testing by Kuhn, Kacker, Lei, and Hunter (pdf download))

- (Prior blog post) "In Praise of Data-Driven Management (AKA “Why You Should be Skeptical of HiPPO’s”)"

- (My brother's blog: he's in IT too and is also a strong proponent of using Design of Experiments-based software test design methods to improve software testing efficiency and effectiveness).