How to Convince Your Organization to Adopt Pairwise Software Testing


Since creating Hexawise, I've worked with executives at companies around the world who have become convinced of the value of pairwise testing. Their next challenge is convincing the rest of their organization of that value.

They often follow a similar path: first thinking "pairwise testing is a nice method in theory, but not applicable in our case," then "pairwise is nice in theory and might be applicable in our case," then "pairwise is applicable in our case," and finally, "How do I convince my organization?"

In this post, I review my experience helping organizations first try, and then adopt, pairwise and combinatorial software testing methods.

About 8 years ago, I was working at a large systems integration firm and was asked to help the firm differentiate its testing offerings from those of other firms.

I admittedly did not know much about software testing then, but by happy coincidence, my father was a leading expert in the field of Design of Experiments. Design of Experiments has applications in many areas (including agriculture, advertising, and manufacturing). Its purpose is to give people tools and approaches to learn as much actionable information as possible in as few tests as possible.

I Googled "Design of Experiments Software Testing." That search led me to Dr. Madhav Phadke (who, by coincidence, had been one of my father's students). More than 20 years ago now, Dr. Phadke and his colleagues at AT&T Bell Labs had asked the question you're asking now. They ran an experiment using pairwise / orthogonal array test design to identify a subset of tests for AT&T's StarMail system. That testing effort was extraordinarily successful and well documented.

Shortly after that search, while still working at that systems integration firm, I began advocating to anyone and everyone who would listen that this approach to designing tests promised to be both (a) more thorough and (b) able to achieve that thoroughness with significantly fewer tests (in most, but not all, cases). Results from 17 straight projects confirmed that both of these statements were true. Consistently.
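To make the "more thorough with fewer tests" idea concrete, here is a minimal sketch assuming a hypothetical system with four inputs that each take three values (the inputs are illustrative, not taken from the StarMail study). Testing every combination takes 3 × 3 × 3 × 3 = 81 tests, while the classic L9 orthogonal array covers every pair of values for every pair of inputs in just 9 tests. The code builds the L9 array with modular arithmetic and checks that claim.

```python
from itertools import combinations, product

LEVELS = 3   # hypothetical: each input takes 3 values, encoded as 0, 1, 2
FACTORS = 4  # hypothetical: 4 inputs

def l9_array():
    """Build the 9-row L9(3^4) orthogonal array: columns i, j, i+j, 2i+j (mod 3)."""
    return [(i, j, (i + j) % 3, (2 * i + j) % 3) for i in range(3) for j in range(3)]

def covered_pairs(tests):
    """Collect every (column_a, value_a, column_b, value_b) pair that some test exercises."""
    pairs = set()
    for test in tests:
        for a, b in combinations(range(len(test)), 2):
            pairs.add((a, test[a], b, test[b]))
    return pairs

def required_pairs(num_factors, num_levels):
    """Every value pair for every pair of inputs: what pairwise coverage demands."""
    return {(a, va, b, vb)
            for a, b in combinations(range(num_factors), 2)
            for va, vb in product(range(num_levels), repeat=2)}

exhaustive = list(product(range(LEVELS), repeat=FACTORS))
pairwise = l9_array()
print(len(exhaustive), len(pairwise))                               # 81 9
print(len(required_pairs(FACTORS, LEVELS)))                         # 54
print(required_pairs(FACTORS, LEVELS) <= covered_pairs(pairwise))   # True
```

Every one of the 54 value pairs appears in the 9 tests, so a defect triggered by any specific pair of input values would be exercised by the smaller set.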

Repeatable Steps to Confirm Whether This Approach Delivers Efficiency and Thoroughness Improvements (and/or to Document a Business Case/ROI Calculation)

How did we demonstrate that this test design approach led to both more thorough testing and much more efficient testing? We followed these steps:

- Take a set of 30 to 100 existing tests that had already been created, reviewed, and approved for testing (but which had not yet been executed).
- Using the test ideas included in those existing tests, design a set of pairwise tests (often approximately half as many tests as were in the original set). When putting your tests together, if there are particular, known, high-priority scenarios that stakeholders believe are important to test, make sure that you "force" your pairwise test generator to include those scenarios.
- Have two different testers execute the two sets of tests, one set each, at the same time (i.e., before developers start fixing any defects uncovered by either set of tests).
- Document the following:
  - How long did it take to execute each set of tests?
  - How many unique defects were identified by each set of tests?
  - How long did it take to create and document each set of tests?*

*This third measurement was usually an estimate because a significant number of teams had not tracked the amount of time it took to create the original set of tests.
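Forcing a pairwise test generator to include high-priority scenarios can be sketched in a few lines. The sketch below treats the forced scenarios as seed tests, then greedily adds whichever full combination covers the most still-uncovered value pairs. This is a simplified greedy heuristic for illustration, not Hexawise's algorithm, and the parameters and forced scenario are hypothetical. Enumerating every candidate combination only works for small models; real tools search more cleverly.

```python
import math
from itertools import combinations, product

def pairs_in(test, names):
    """All ((param, value), (param, value)) pairs exercised by one test."""
    return {((a, test[a]), (b, test[b])) for a, b in combinations(names, 2)}

def uncovered_pairs(params, tests):
    """Value pairs required for pairwise coverage that no test covers yet."""
    names = list(params)
    needed = {((a, va), (b, vb))
              for a, b in combinations(names, 2)
              for va in params[a]
              for vb in params[b]}
    for test in tests:
        needed -= pairs_in(test, names)
    return needed

def pairwise_tests(params, forced=()):
    """Greedy pairwise generation seeded with forced scenarios.

    Each forced scenario must assign a value to every parameter.
    """
    names = list(params)
    tests = [dict(t) for t in forced]  # forced scenarios always come first
    remaining = uncovered_pairs(params, tests)
    # Toy approach: consider every full combination as a candidate test.
    candidates = [dict(zip(names, combo)) for combo in product(*params.values())]
    while remaining:
        # Pick the candidate that covers the most still-uncovered pairs (always >= 1).
        best = max(candidates, key=lambda c: len(pairs_in(c, names) & remaining))
        tests.append(best)
        remaining -= pairs_in(best, names)
    return tests

# Hypothetical test model and high-priority scenario, for illustration only.
params = {
    "browser": ["Chrome", "Firefox", "Safari"],
    "os": ["Windows", "macOS", "Linux"],
    "account": ["new", "existing", "premium"],
    "payment": ["card", "paypal"],
}
forced = [{"browser": "Safari", "os": "macOS", "account": "premium", "payment": "paypal"}]

tests = pairwise_tests(params, forced)
exhaustive = math.prod(len(v) for v in params.values())
print(f"{len(tests)} pairwise tests instead of {exhaustive} exhaustive combinations")
```

Because the forced scenario is seeded first, any pairs it already covers are removed before the greedy search begins, so the generator builds the remaining tests around it rather than duplicating its coverage.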

The results of 17 different pairwise testing "bake-off" projects conducted at my old firm included:

- Defects found per tester hour during test execution: when pairwise tests were used, more than twice as many defects were found per tester hour.
- Total defects found: pairwise tests found as many or more defects in every single project (even though, in almost every case, the original set contained significantly more tests).
- Defects found by pairwise tests but missed by traditional tests: a lot (I forget the exact number).
- Defects found by traditional tests but missed by pairwise tests: zero.
- Amount of time to select and document tests: much less time was required when a pairwise test generator was used (as mentioned above, precise measurements were difficult to gather here).
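The bake-off measurements lend themselves to a simple side-by-side calculation. The sketch below assumes each test set's execution time is logged in hours and that both testers log defects against a shared defect ID, so that "found only by pairwise" and "found only by traditional" become plain set differences. The names `BakeOffResult` and `compare` are hypothetical, and the input numbers are placeholders, not results from the 17 projects.

```python
from dataclasses import dataclass, field

@dataclass
class BakeOffResult:
    """Hypothetical record of one test set's execution during a bake-off."""
    name: str
    execution_hours: float
    defect_ids: set = field(default_factory=set)  # unique defect IDs, shared across both sets

    def defects_per_hour(self) -> float:
        return len(self.defect_ids) / self.execution_hours

def compare(traditional: BakeOffResult, pairwise: BakeOffResult) -> dict:
    """Compute the bake-off comparison metrics described above."""
    return {
        "traditional_defects_per_hour": traditional.defects_per_hour(),
        "pairwise_defects_per_hour": pairwise.defects_per_hour(),
        "found_only_by_pairwise": sorted(pairwise.defect_ids - traditional.defect_ids),
        "found_only_by_traditional": sorted(traditional.defect_ids - pairwise.defect_ids),
    }

# Placeholder inputs for illustration only; NOT figures from any real project.
traditional = BakeOffResult("traditional", execution_hours=40.0, defect_ids={"D1", "D2", "D3"})
pairwise = BakeOffResult("pairwise", execution_hours=20.0, defect_ids={"D1", "D2", "D3", "D4"})

report = compare(traditional, pairwise)
for key, value in report.items():
    print(f"{key}: {value}")
```

Keying defects by ID matters: without a shared identifier, the same defect found by both testers would be double-counted and the "missed by" comparisons would be meaningless.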

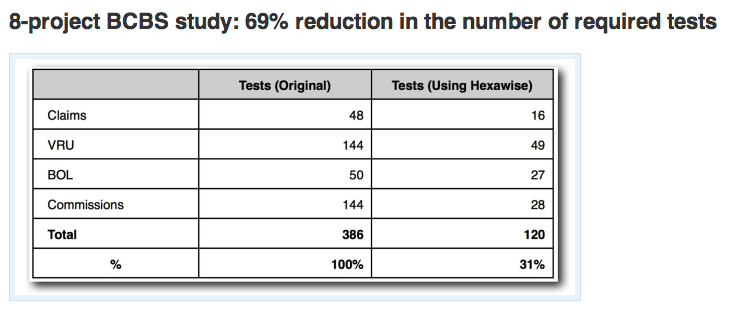

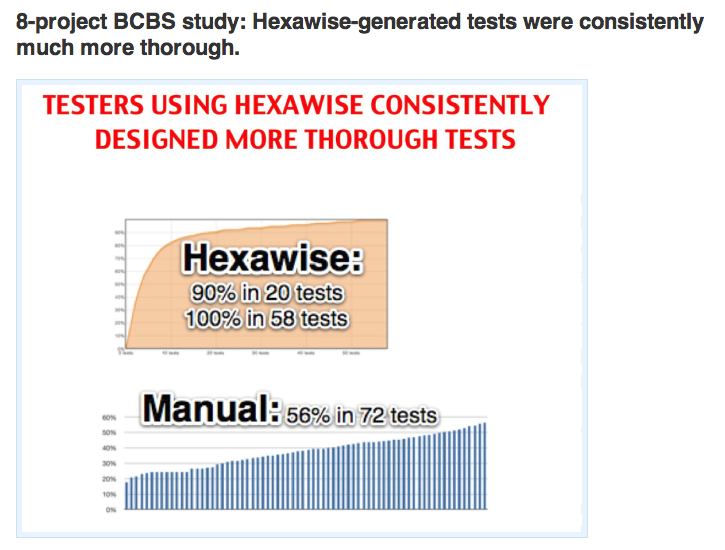

More recent project benefits have included these:

Those experiences, combined with the realization that many Fortune 500 firms were starting to implement smarter test design methods to achieve these kinds of benefits but were struggling to find a user-friendly test design tool that would integrate with their other tools, led me to the decision to create Hexawise.

Additional Advice and Lessons Learned Based on My Experiences

Once someone begins testing the value of pairwise software testing at a specific organization, it is very common for that proponent to find themselves saying:

> I have already elaborated some test plans that would save us up to 50% effort with that method. But now my boss and other colleagues are asking me for a proof that these pairwise test cases suffice to make sure our software is running well.

In that case, my advice is three-fold:

First, appreciate how your own thinking has evolved, and understand that other people will need to follow a similar journey (and that they won't have had as much time as you to learn first-hand).

When I was creating Hexawise, George Box, a Design of Experiments expert with decades of experience explaining to skeptical executives how Design of Experiments could deliver transformational improvements to their organizations' efficiency and effectiveness, told me, "Justin, you'll experience three phases of acceptance, and acceptance will happen more gradually than you would expect it to happen. First, people will tell you 'It won't work.' Next, they'll say 'It won't work here.' Eventually," he said with a smile, "they'll tell you 'Of course this works. I thought of it first!'"

When people hear that you can double their test execution productivity with a new approach, they won't initially believe you. They'll be skeptical. Most people you're explaining this approach to will start with the thought that "it is nice in theory but not applicable to what I'm doing." It will take some time and experience for people to understand and appreciate how dramatic the benefits are.

Second, people will instinctively dismiss case study after case study after case study showing how effective pairwise testing has been for testers in virtually every industry and in all types and phases of testing. George Box was right when he predicted that people will often respond with 'It won't work here.' Sometimes it is hard not to smile when people take that position.

Case in point: I will soon be talking to a senior executive at a large capital markets firm about how our tool can help his firm transform the efficiency and effectiveness of its testing group. And I can introduce him to a client of ours that uses our test design tool extensively on every single one of its most important IT projects. Will that executive take me up on my offer? I hope so, but based on past experience, I suspect the odds are good that he'll instead react with 'Yes, yes, sure, if companies were people, that company would be our company's identical twin, but still... It won't work here.'

Third, at the end of the day, the most effective approach I have found to address that understandable skepticism and to secure organization-level buy-in and commitment is to gather hard, indisputable evidence, on multiple projects at the company itself, that the approach works, using a bake-off approach (i.e., following the four steps outlined above). A few words of advice, though.

My proposed approach isn't for the faint of heart. If you're working at a large company with established approaches, you'll need patience and persistence.

Even after you gather evidence that this approach works in Business Units A, B, and C, someone from Business Unit D will remain unconvinced by the compelling and irrefutable evidence you have gathered and tell you, 'It won't work here. Business Unit D is unique.' The same objections will likely arise with results from "Types of Testing" A, B, and C. As powerful and widely applicable as this test design approach is, always remember (and be clear with stakeholders) that it is not a magical silver bullet.

James Bach raises several valid limitations of this approach. In particular, this approach won't work unless testers with relatively strong analytical skills drive the test design process. Because pairwise test generation tools depend on thoughtful test designers to identify the appropriate test inputs to vary, this approach (like all test design approaches) is subject to a "garbage in / garbage out" risk.

Project leads will resist "duplicating effort." But unless you do an actual bake-off, stakeholders won't appreciate how broken their existing process is. There's inevitably far more wasteful repetition hidden away in standard tests than people realize. When you start reporting a doubling of tester productivity on several projects, smart managers will take notice and want to get involved. At that point, hopefully, your perseverance will be rewarded.

Some benefits data and case studies that you might find useful:

- Does Pairwise Testing Really Work? Evidence, Data, and Case Studies
- Utilizing Design of Experiments to Reduce IT Systems Testing Costs

If you can't change your company, consider changing companies

Lastly, remember that your new-found skills are in high demand whether or not they're valued at your current company. And know that, despite your best efforts and intentions, you might not convince the skeptics. Some people inevitably won't be willing to take the time to understand. If you find yourself wanting to use this test design approach (because you know that these approaches are powerful, practical, and widely applicable) but lacking management buy-in, consider whether it would be worth leaving your current employer to join a company that will let you use your new-found skills.

Most of our clients, for example, are actively looking for software test designers with well-developed pairwise and combinatorial test design skills. And they're even willing to pay a salary premium for highly analytical test designers who are able to design sets of powerful tests. (We publicize such job openings in the LinkedIn Hexawise Guru group for testers who have achieved "Guru" level status in the tool's self-paced computer-based training modules.)

Related: Looking at the Empirical Evidence for Using Pairwise and Combinatorial Software Testing - Systematic Approaches to Selection of Test Data - Getting Known Good Ideas Adopted