Under- and Over-Testing: Problems

How much testing is enough? The importance of answering this question accurately cannot be overstated. Consider what happens when the answer is wrong. If a team runs too few tests, defects may go undetected, resulting in bugs in production (which can be incredibly costly to fix) and a sub-par user experience. Conversely, a team that over-tests its product wastes valuable development time and resources, because each additional test yields sharply diminishing returns.

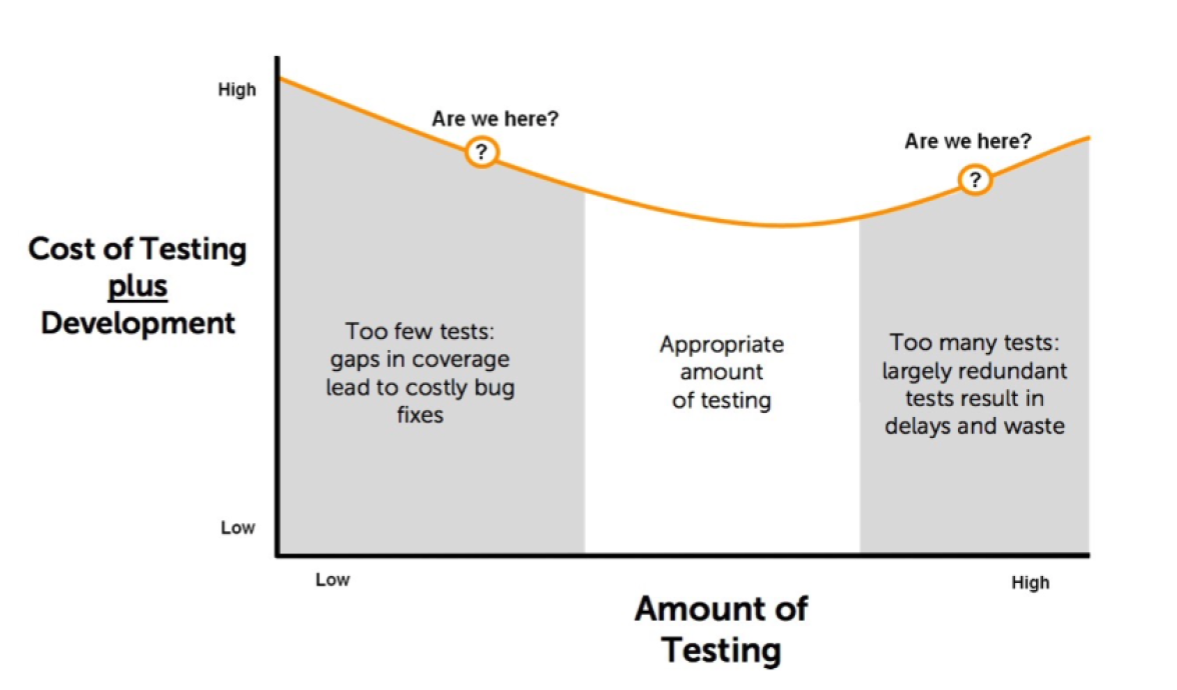

Both under-testing and over-testing drive up the overall cost of development for a given product. Developments costs are minimized somewhere between the two, as seen in this testing-cost curve:

However, positioning your team in the middle of this curve, in the valley of low development costs (a suitably picturesque name for something that can save your company money), is easier said than done. It may require an overhaul of how your team designs testing sets and measures their coverage. Before we discuss how to get there, let's look at some of the common mistakes that keep teams on the high-cost slopes on either side of the valley.

The biggest mistake is an obvious one, but difficult to address nonetheless: many teams lack the tools to measure their testing coverage, which makes it hard to pinpoint the right level of testing. Without a way to tell whether a given testing set is too small to provide sufficient coverage, or whether a smaller set would suffice, teams are forced to resort to guesswork and trial and error. For a large, complicated, or highly consequential product, these methods are unsatisfactory and won't reliably lead your team to the valley of low development costs.

Another common barrier to cost minimization? Inefficient test design. The usual culprit is manually designed tests, which tend to be overly repetitive: they keep retreading the same parameters and, as a result, cover only a fraction of user stories. To compensate and achieve adequate coverage, teams are forced to run a huge number of tests, which places them squarely to the right of the low-cost valley on the testing-cost curve.
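
To make the repetition problem concrete, here is a small Python sketch. It assumes coverage is measured as the fraction of parameter-value pairs exercised by at least one test, which is one simple yardstick and not necessarily the one your team uses. The parameters, values, and test cases are all hypothetical.

```python
from itertools import combinations, product

# Hypothetical system under test: three parameters and their possible values.
PARAMETERS = {
    "browser": ["Chrome", "Firefox", "Safari"],
    "payment": ["card", "paypal", "gift_card"],
    "account": ["guest", "member"],
}

# Hypothetical hand-written suite that keeps reusing the same values.
MANUAL_TESTS = [
    {"browser": "Chrome", "payment": "card", "account": "member"},
    {"browser": "Chrome", "payment": "card", "account": "guest"},
    {"browser": "Chrome", "payment": "paypal", "account": "member"},
    {"browser": "Firefox", "payment": "card", "account": "member"},
    {"browser": "Chrome", "payment": "card", "account": "member"},  # exact repeat
    {"browser": "Safari", "payment": "card", "account": "member"},
]


def all_pairs(parameters):
    """Every (param_a, value_a, param_b, value_b) combination that could be tested."""
    pairs = set()
    for a, b in combinations(sorted(parameters), 2):
        for va, vb in product(parameters[a], parameters[b]):
            pairs.add((a, va, b, vb))
    return pairs


def covered_pairs(tests):
    """Pairs actually exercised by at least one test case."""
    pairs = set()
    for test in tests:
        for a, b in combinations(sorted(test), 2):
            pairs.add((a, test[a], b, test[b]))
    return pairs


def pairwise_coverage(parameters, tests):
    """Fraction of all possible value pairs that the suite exercises."""
    possible = all_pairs(parameters)
    return len(covered_pairs(tests) & possible) / len(possible)


if __name__ == "__main__":
    covered = len(covered_pairs(MANUAL_TESTS))
    total = len(all_pairs(PARAMETERS))
    print(f"{len(MANUAL_TESTS)} tests cover {covered} of {total} value pairs "
          f"({pairwise_coverage(PARAMETERS, MANUAL_TESTS):.0%})")
```

Six tests sound like a reasonable effort, yet because most of them reuse `Chrome` and `card`, they exercise only 11 of the 21 possible pairs. Closing that gap by writing more tests of the same kind is what pushes a team up the over-testing slope.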

Finally, let's examine one of the most pernicious issues in software testing: ambiguous requirements. Stemming from unclear documentation, misunderstandings of the system under test, and miscommunication about testing priorities, ambiguous requirements can leave teams guessing about what to test and what to skip. Critical user stories that should have been clearly enumerated in the requirements may be left out of testing sets, resulting in under-testing. Conversely, overcompensating for unclear requirements may lead teams to run an excessive number of test cases, placing them on the over-testing side of the testing-cost curve. Whatever the specific outcome, ambiguous requirements can significantly impair your team's ability to test your product effectively and cheaply.

If your team suffers from under- or over-testing, it is imperative to diagnose the underlying issues that keep it from reaching the valley of low costs. Next week, we'll release an article that offers solutions to these issues, allowing your team to hike down into the valley and bask in the golden rays of cheap testing.