Under- and Over-Testing: Solutions

Last week, we took an in-depth look at over-testing and under-testing, two common testing mistakes that can drive up the cost and hinder the efficiency of any software development project. In this follow-up article, we’ll discuss how to solve these problems, saving your team time, effort, and a considerable amount of money. Effectively addressing under- and over-testing starts with an accurate diagnosis of their root causes.

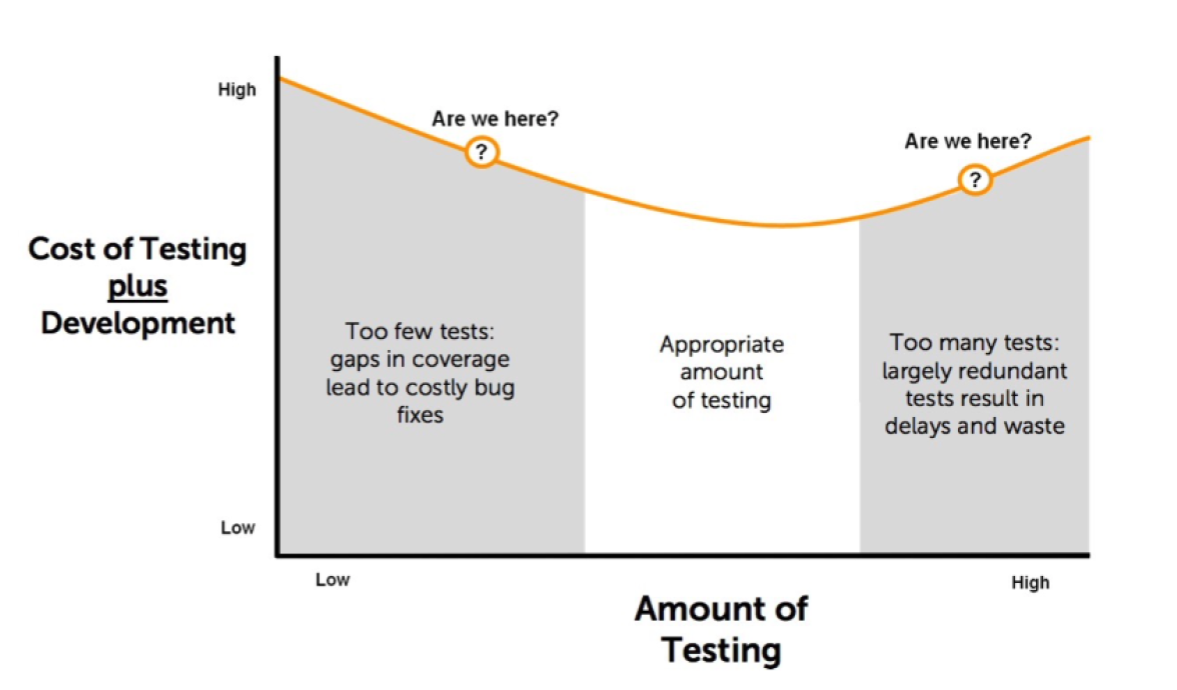

While the causes of these problems are numerous, we whittled them down to three main categories: a lack of test coverage measurement, inefficient test design, and ambiguous requirements, each of which can lead developers to over- or underestimate the optimal number of tests to perform. Each of these underlying causes calls for different methods and strategies; there is no one-size-fits-all approach to balanced testing. Diagnosing and addressing these issues is worth it, however: remember the chart we discussed last week, which shows how eliminating under- and over-testing can save your team significant amounts of money.

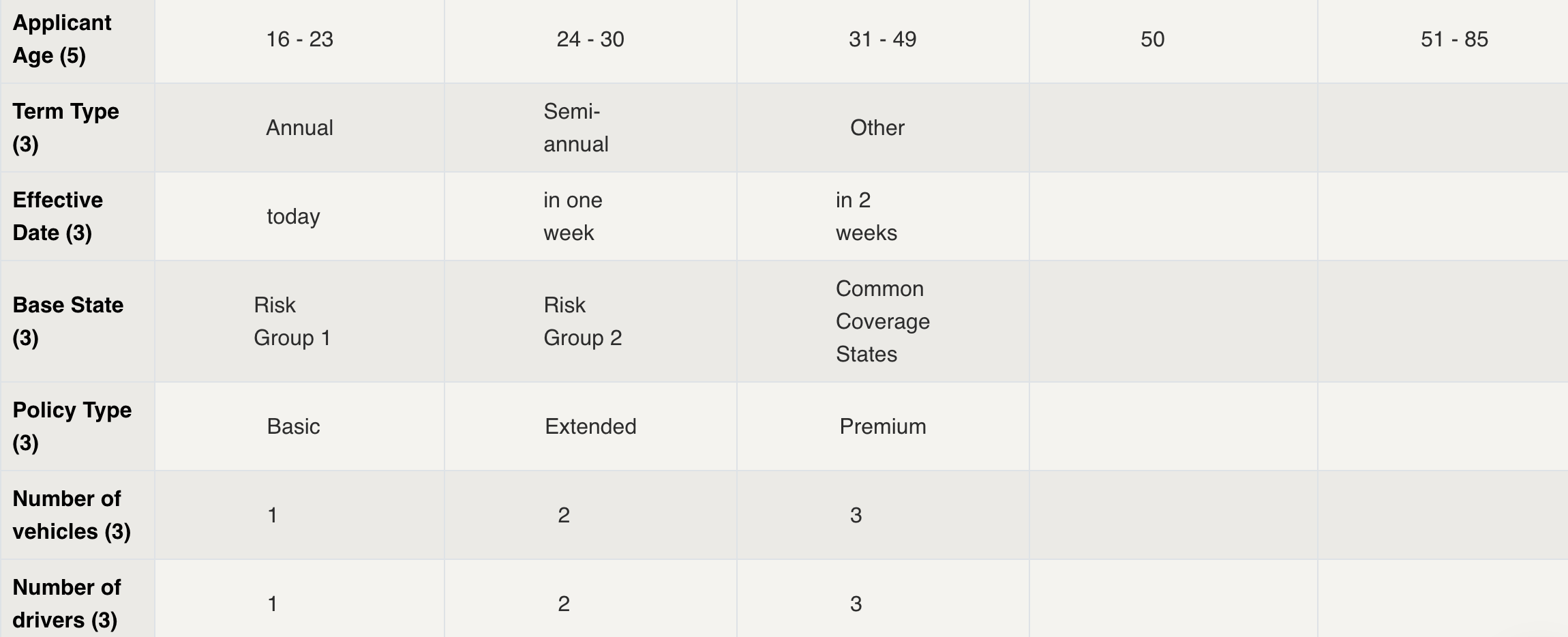

If a team’s testing issues come from inefficient test design, which can force them into excessive amounts of testing to achieve sufficient coverage, then the answer might be an overhaul of their test design process. One test design approach that has proven successful is model-based testing. Developers and testers first create a model of the system made up of parameters, which are generally any elements of the system that the user can change or affect; they then assign each parameter all of the values it can possibly take. For example, a website offering a subscription service may appear different to users with no subscription, a normal subscription, or a pro subscription; a tester would likely choose to enter “Type of User” into the model as a parameter, with “non-user”, “normal user”, and “pro user” as the parameter values.
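To make the idea of a model concrete, here is a minimal sketch in Python of parameters and their values, along with the number of tests that exhaustively covering every combination would require. Only “Type of User” comes from the example above; the `Browser` and `Device` parameters and the `exhaustive_tests` helper are hypothetical, added purely for illustration.

```python
from itertools import product

# A model maps each parameter to every value it can take.
# "Browser" and "Device" are hypothetical additions for illustration.
model = {
    "Type of User": ["non-user", "normal user", "pro user"],
    "Browser": ["Chrome", "Firefox", "Safari"],
    "Device": ["desktop", "mobile"],
}


def exhaustive_tests(model):
    """Return one test (a dict) for every combination of parameter values."""
    names = list(model)
    return [dict(zip(names, combo)) for combo in product(*model.values())]


tests = exhaustive_tests(model)
print(len(tests))  # 3 * 3 * 2 = 18 combinations
```

Even this tiny model needs 18 tests to cover every combination, and the count multiplies with each parameter added, which is why testing everything quickly becomes over-testing.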

The advantage of model-based testing is that these models can easily be fed into a wide variety of test design tools, such as Hexawise, in order to quickly generate test sets that are mathematically optimized for efficiency. This optimization ensures that a team will be able to reach a sufficient level of coverage in a reasonable number of tests, preventing over-testing.
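One common form of this optimization is pairwise (2-way) coverage, where every pair of parameter values appears together in at least one test. The sketch below uses a simple greedy selection to show the idea; it is an assumption-laden illustration, not Hexawise's actual algorithm, and the model contents (other than “Type of User”) and function names are hypothetical.

```python
from itertools import combinations, product

# Hypothetical model; only "Type of User" comes from the article.
model = {
    "Type of User": ["non-user", "normal user", "pro user"],
    "Browser": ["Chrome", "Firefox", "Safari"],
    "Device": ["desktop", "mobile"],
}


def pairs_of(test):
    """All pairs of (parameter, value) settings that one test exercises together."""
    return set(combinations(sorted(test.items()), 2))


def greedy_pairwise(model):
    """Repeatedly pick the candidate test that covers the most uncovered pairs."""
    names = list(model)
    candidates = [dict(zip(names, combo)) for combo in product(*model.values())]
    uncovered = set()
    for test in candidates:
        uncovered |= pairs_of(test)
    suite = []
    while uncovered:
        best = max(candidates, key=lambda t: len(pairs_of(t) & uncovered))
        suite.append(best)
        uncovered -= pairs_of(best)
    return suite


suite = greedy_pairwise(model)
print(f"{len(suite)} pairwise tests instead of 18 exhaustive ones")
```

Enumerating every candidate test, as this sketch does, only works for small models; dedicated tools use more scalable techniques, but the goal of covering every pair in as few tests as possible is the same.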

A model created within Hexawise, showing the parameters a tester might define for an insurance application.

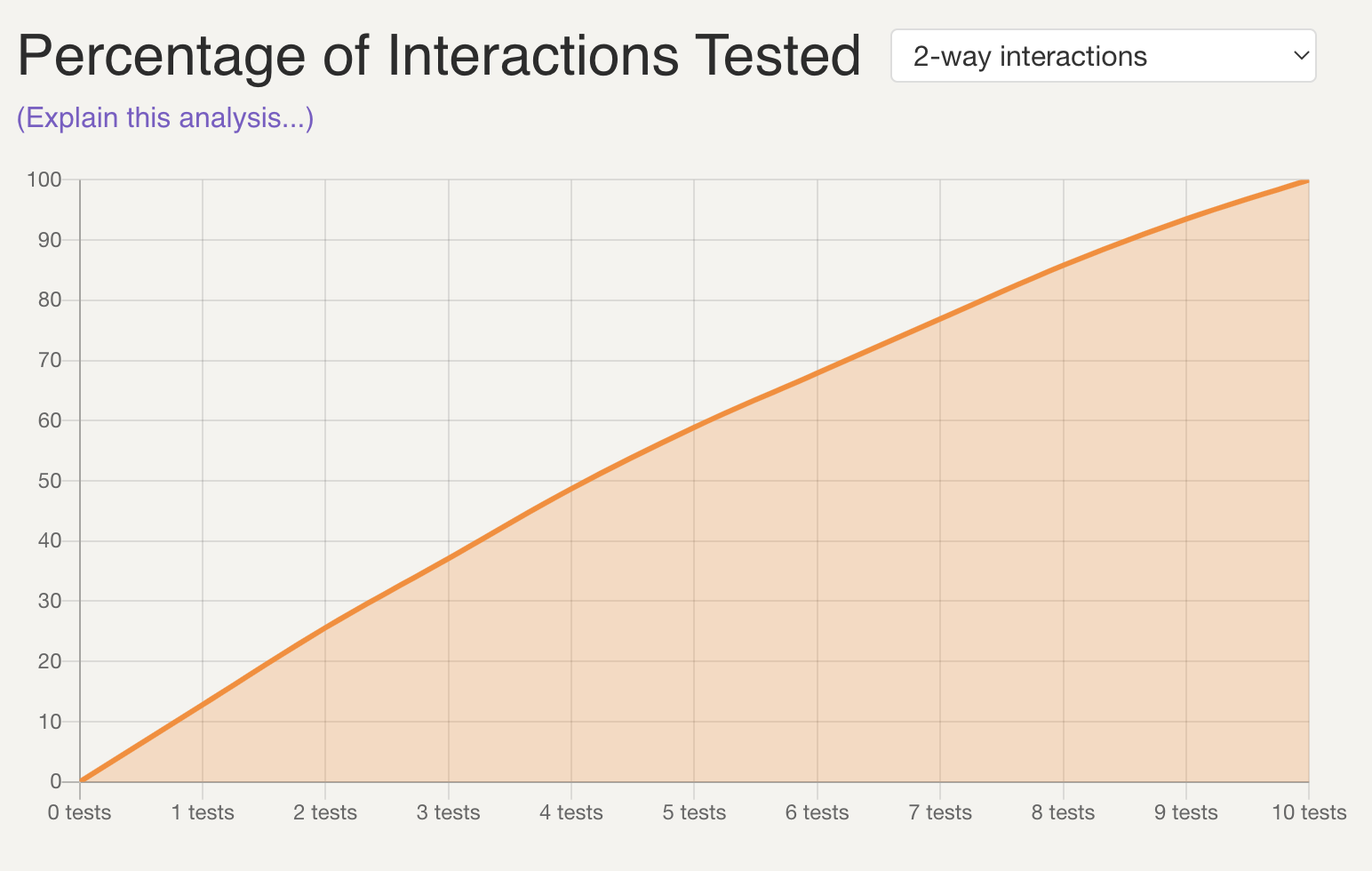

If a team decides to adopt this Hexawise-aided, model-based testing approach to test design, they will find that another issue that can cause under- and over-testing can now be easily addressed: test coverage measurement. Hexawise has a built-in testing coverage visualizer, which automatically generates graphs that relate the level of testing coverage to the number of tests performed. This allows a team to execute the exact number of tests necessary to reach a desired level of coverage, eliminating guesswork and preventing under- and over-testing.

An example of a coverage graph generated within Hexawise.

While the previous two issues can be solved simply by adopting new tools, the last issue, ambiguous requirements, requires a more holistic approach to address. Hexawise can certainly help: requirements can be entered into the tool, which will guarantee their appearance in any set of test cases that the tool generates.
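As a rough illustration of what guaranteeing a requirement's appearance means, the sketch below treats each requirement as a partial assignment of parameter values and checks whether any test in a suite contains it. The representation, parameter names, and function names are hypothetical; this is not how Hexawise stores or enforces requirements.

```python
def requirement_is_covered(requirement, suite):
    """True if some test sets every parameter the requirement names to the required value."""
    return any(
        all(test.get(param) == value for param, value in requirement.items())
        for test in suite
    )


def uncovered_requirements(requirements, suite):
    """Requirements that no test in the suite exercises."""
    return [r for r in requirements if not requirement_is_covered(r, suite)]


# Hypothetical suite and requirements for illustration.
suite = [
    {"Type of User": "non-user", "Device": "desktop"},
    {"Type of User": "pro user", "Device": "mobile"},
]
requirements = [
    {"Type of User": "pro user", "Device": "mobile"},
    {"Type of User": "normal user"},
]

print(uncovered_requirements(requirements, suite))
# [{'Type of User': 'normal user'}]
```

Any requirement returned here would need a test added to the suite before it could be considered covered.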

However, ambiguous requirements are often a symptom of a communication issue, which cannot be addressed by introducing new tools alone. A great way to bridge the gap is to have the testing team collaborate with the developers to construct the model used to generate test cases, ensuring that both teams share the same understanding of the system under test.

As we mentioned before, eliminating under- and over-testing is not always easy. At Hexawise, it is our goal to make it as easy as possible, allowing your team to quickly and painlessly position itself squarely between under- and over-testing, minimizing the cost, time, and effort associated with testing.