Testing Smarter with Dorothy Graham

This interview with Dorothy Graham is part of our series of “Testing Smarter with…” interviews. Our goal with these interviews is to highlight insights and experiences as told by many of the software testing field’s leading thinkers.

Dorothy Graham has been in software testing for over 40 years, and is co-author of 4 books: Software Inspection, Software Test Automation, Foundations of Software Testing and Experiences of Test Automation.

Personal Background

Hexawise: Do your friends and relatives understand what you do? How do you explain it to them?

Dot: Some of them do! Sometimes I try to explain a bit, but others are just simply mystified that I get invited to speak all over the world!

Hexawise: If you could write a letter and send it back in time to yourself when you were first getting into software testing, what advice would you include in it?

Dot: I would tell myself to go for it. When I started in testing it was definitely not a popular or highly-regarded area, so I accidentally made the right career choice (or maybe Someone up there planned my career much better than I could have).

Hexawise: Which person or people have had the greatest influence on your understanding and practice of software testing?

Dot: Tom Gilb was (and is) a big influence, although in software engineering in general rather than testing. The early testing books (Glenford Myers, Bill Hetzel, Cem Kaner, Boris Beizer) were very influential in helping me to understand testing. I attended Bill Hetzel’s course when I was just beginning to specialize in testing, and he was a big influence on my view of testing back then. I also learned a great deal from many discussions about testing with friends in the Specialist Group in Software Testing (SIGIST) and with colleagues at Grove, especially Mark Fewster.

Hexawise: Failures can often lead to interesting lessons learned. Do you have any noteworthy failure stories that you’d be willing to share?

Dot: I was able to give a talk about a failure story in Inspection, where a very successful Inspection initiative had been “killed off” by a management re-organisation that failed to realise what they had killed. There were a lot of lessons about communicating the value of Inspection (which also apply to testing and test automation). The most interesting presentation was when I gave it at the company where it had happened a number of years before; one (only one) person realized it was them.

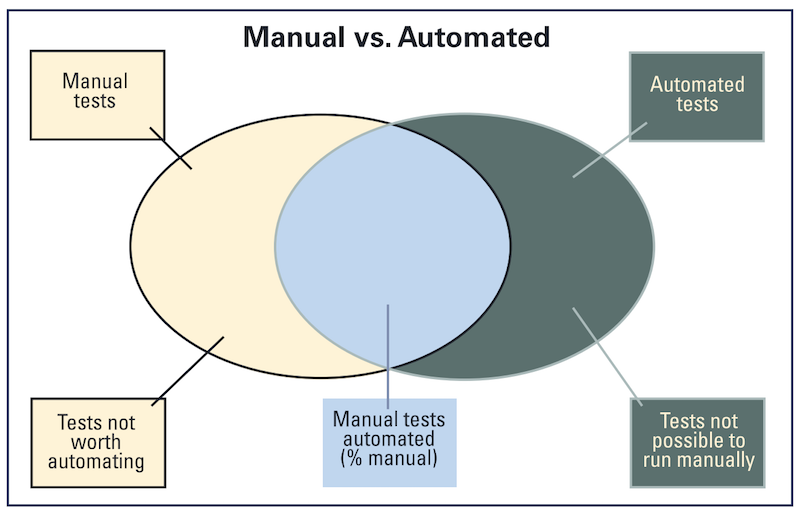

Hexawise: Describe a testing experience you are especially proud of. What discovery did you make while testing and how did you share this information so improvements could be made to the software?

Dot: One of the most interesting experiences I had was when I was doing a training course in Software Inspection for General Motors in Michigan, and in the afternoon, when people were working on their own documents, several people left the room quite abruptly. I later found out that they had gone to stop the assembly line! They were Inspecting either braking systems or cruise control systems.

When I was working at Ferranti on Police Command and Control Systems, I discovered that the operating system’s timing routines were not working correctly. This was critical for our system, as we had to produce reminders in 2 minutes and 5 minutes if certain actions had not been taken on critical incidents. I wrote a test program that clearly highlighted the problems in the OS and sent it to the OS developers, wondering what their reaction would be. In fact they were delighted and found it very useful (and they did fix the bugs). It did make me wonder why they hadn’t thought to test it themselves though.

Views on Software Testing

Hexawise: In That's No Reason to Automate, you state "Testing and automation are different and distinct activities." Could you elaborate on that distinction?

Dot: By “automation”, I am referring to tools that execute tests that they have been set up to run. Execution of tests is one part of testing, but testing is far more than just running tests. First, what is it that needs to be tested? Then, how best can that be tested - what specific examples would make good test cases? Then, how are these tests constructed to be run? Then they are run, and afterwards, what was the outcome from the test compared to what was expected, i.e. did the software being tested do what it should have done? This is why tools don’t replace testers - they don’t do testing, they just run stuff.

Diagram from That's No Reason to Automate

Hexawise: Test automation allows for great efficiency. But there are limits to what can be effectively automated. How do you suggest testers determine what to automate and what to devote testers' precious time toward investigating personally?

Dot: There are two fallacies when people say “automate all the manual tests” (which is unfortunately quite common). The first one is that all manual tests should be automated - this is not true. Some tests that are now manual would be good candidates for automation, but other tests should always be manual. For example, “do these colours look nice?” or “is this what users really do?” or even “Captcha” where it should only work if a person not a machine is filling in a form. Think about it - if your automated test can do the Captcha, then the Captcha is broken.

Automating the manual tests “as is” is not the best way to automate, since manual tests are optimized for human testers. Machines are different to people, and tests optimized for automation may be quite different. And finally, a test that takes an inordinate amount of time to automate, if the test isn’t needed often, is not worth automating.

The other fallacy is in thinking that the automation does only what is done by manual tests. There are often many things that are impossible or infeasible in manual testing that can easily be done automatically. For example if you are testing manually, you see what is on the screen, but you don’t know if all the GUI (graphical user interface) objects are in the correct state - this could be checked by a tool. There are also types of testing that can only be done automatically, such as Monkey testing or High Volume Automated Testing.

Hexawise: Can you describe a view or opinion about software testing that you have that many or most smart experienced software testers probably disagree with? What led you to this belief?

Dot: One view that I feel strongly about, and many testers seem to disagree with this, is that I don’t think that all testers should be able to write code, or, what seems to be the prevailing view, that the only good testers are testers who can code. I have no objection to people who do want to do both, and I think this is great, especially in Agile teams. But what I object to is the idea that this has to apply to all testers.

There are many testers who came into testing perhaps through the business side, or who got into testing because they didn’t want to be developers. They have great skills in testing, and don’t want to learn to code. Not only would they not enjoy it, they probably wouldn’t be very good at it either! As Hans Buwalda says, “you lose a good tester and gain a poor programmer.” The ultimate effect of this attitude is that testing skills are being devalued, and I don’t think that is right or good for our industry!

Hexawise: What do you wish more developers, business analysts, and project managers understood about software testing?

Dot: The limitations of testing. Testing is only sampling, and can find useful problems (bugs) but there aren’t enough resources or time in the universe to “test everything”.

The best way to have fewer bugs in the software is not to put them in in the first place. I would like to see testers become bug prevention advisors! And I think this is what happens on good teams.

Hexawise: I agree that becoming bug prevention advisors is a very powerful concept. Software development organizations should be seeking to improve the entire system (don't create bugs, then try to catch them and then fix them - stop creating them). I recently discussed these ideas in Software Code Reviews from a Deming Perspective.

Dot: I had a quick look at the blog and like it - especially the emphasis on it being a learning experience. It seems to be viewing “inspection” in the manufacturing sense as looking at what comes off the line at the end. In our book “Software Inspection” (which is actually a review process), it is very much an up-front process. In fact applying inspection/reviews to things like requirements, contracts, architecture design etc is often more valuable than reviewing code.

I also fully agree with selecting what to look at - in our book we recommend a sampling process to identify the types of serious bug, then try to remove all others of that type - that way you can actually remove more bugs than you found.

Hexawise: In what ways would you see "becoming bug prevention advisors" happening? Done in the wrong way it can make people defensive about others advising them how to do their jobs in order to avoid creating bugs. What advice do you have for how software testers can make this happen and do it well?

Dot: I see the essential mind-set of the tester as asking “what could go wrong?” or “what if it isn’t” [as it is supposed to be]. If you have an Agile team that includes a tester, then those questions are asked perhaps as part of pair working - that way the potential problems are identified and thought about before or as the code is written. The best way is close collaboration with developers - developers who respect and value the tester’s perspective.

Industry Observations / Industry Trends

Hexawise: Your latest book, Experiences of Test Automation, includes an essay on Exploratory Test Automation. How are better tools making the ideas in this essay more applicable now than they were previously?

Dot: Actually this is now called High-Volume Automated Testing, which is a much better name for it. HiVAT uses massive amounts of test inputs, with automated partial oracles. The tests are checking that the results are reasonable, not exact results, and any that are outside those bounds are reported to humans to investigate. Harry Robinson described using this technique to test Bing - quite a large thing to test!
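As a rough illustration of the idea described above (many generated inputs, checked by a partial oracle that only asks whether each result is reasonable), here is a minimal Python sketch. Everything in it is an assumption made for illustration: the function under test is a stand-in square-root routine, and the names `partial_oracle`, `run_high_volume` and `buggy_sqrt` are hypothetical, not taken from any tool or from the book.

```python
import math
import random


def sqrt_under_test(x: float) -> float:
    """Stand-in for the software under test (hypothetical)."""
    return math.sqrt(x)


def buggy_sqrt(x: float) -> float:
    """Deliberately broken variant, used to show the oracle flagging results."""
    return math.sqrt(x) if x < 500_000 else math.sqrt(x) + 1.0


def partial_oracle(x: float, result: float) -> bool:
    """Accept any result that is reasonable; no exact expected value is stored."""
    if result < 0:
        return False
    # Squaring the result should land very close to the original input.
    return math.isclose(result * result, x, rel_tol=1e-9, abs_tol=1e-12)


def run_high_volume(function, oracle, runs: int, seed: int = 0) -> list:
    """Feed many random inputs to `function`; collect those the oracle rejects."""
    rng = random.Random(seed)  # fixed seed so a suspicious run can be repeated
    suspicious = []
    for _ in range(runs):
        x = rng.uniform(0.0, 1e6)
        result = function(x)
        if not oracle(x, result):
            suspicious.append((x, result))  # left for a human to investigate
    return suspicious


if __name__ == "__main__":
    for candidate in (sqrt_under_test, buggy_sqrt):
        flagged = run_high_volume(candidate, partial_oracle, runs=100_000)
        print(candidate.__name__, "suspicious results:", len(flagged))
```

The oracle here never knows the exact expected answer; it only rejects results that fall outside plausible bounds, which is what makes it cheap enough to apply to a very large number of inputs.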

Hexawise: When looking to automate tests one thing people sometimes overlook is that given the new process it may well be wise to add more tests than existed before. If all test cases were manually completed that list of cases would naturally have been limited by the cost of repeatedly manually checking so many test cases in regression testing. If you automate the tests it may well be wise to expand the breadth of variations in order to catch bugs caused by the interaction of various parameter values. What are your thoughts on this idea?

Dot: Nice analogy - I like the term “grapefruit juice bugs”. Using some of the combinatorial techniques is a good way to cover more combinations in a reasonably rigorous way. Automation can help to run more tests (provided that expected results are available for them) and may be a good way to implement the combination tests, using pair-wise and/or orthogonal arrays.
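To make the pair-wise idea concrete, the following Python sketch builds a small test suite in which every pair of parameter values appears in at least one test. It is only one simple way to do this: a greedy selection over the full set of combinations, which is practical for small parameter sets only, and it does not construct orthogonal arrays. The parameter names and the helper functions (`all_pairs`, `pairs_in`, `pairwise_suite`) are hypothetical.

```python
from itertools import combinations, product


def all_pairs(parameters: dict) -> set:
    """Every pair of (parameter, value) settings that the suite must cover."""
    pairs = set()
    for a, b in combinations(list(parameters), 2):
        for value_a, value_b in product(parameters[a], parameters[b]):
            pairs.add(((a, value_a), (b, value_b)))
    return pairs


def pairs_in(test: dict) -> set:
    """The value pairs exercised by a single test case."""
    return {((a, test[a]), (b, test[b])) for a, b in combinations(list(test), 2)}


def pairwise_suite(parameters: dict) -> list:
    """Greedily pick tests until every value pair is covered at least once."""
    names = list(parameters)
    candidates = [dict(zip(names, values)) for values in product(*parameters.values())]
    uncovered = all_pairs(parameters)
    suite = []
    while uncovered:
        # Choose the candidate that covers the most still-uncovered pairs.
        best = max(candidates, key=lambda test: len(pairs_in(test) & uncovered))
        suite.append(best)
        uncovered -= pairs_in(best)
    return suite


if __name__ == "__main__":
    # Hypothetical parameters: 3 x 3 x 2 = 18 exhaustive combinations.
    parameters = {
        "browser": ["Chrome", "Firefox", "Safari"],
        "os": ["Windows", "macOS", "Linux"],
        "locale": ["en", "de"],
    }
    for test in pairwise_suite(parameters):
        print(test)
```

For these three parameters the greedy suite is smaller than the 18 exhaustive combinations while still exercising every pair of values. As noted in the answer above, the tests are only useful if an expected result (or at least a partial oracle) is available for each one.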

Hexawise: In your experience, how big of a problem is automation decay (automated tests not being maintained properly)? How do you recommend companies avoid this pitfall?

Dot: This seems to be the most common way that test automation efforts get abandoned (and tools become shelfware), particularly for system-level test automation. It can be avoided by having a well-structured testware architecture in the first place, where maintenance concerns are thought about beforehand, using good programming practices for the automation code. Although fixing this later is possible, preventing it in the first place is far better.

Hexawise: Large companies often discount the importance of software testing. What advice do you have for software testers to help their organizations understand the importance of software testing efforts in the organization?

Dot: Unfortunately, often the best way to get an organisation to appreciate testing is to have a small disaster, which adequate testing could have prevented. I’m not exactly suggesting that people should do this, but testing, like insurance, is best appreciated by those who suffer the consequences of not having had it. Perhaps just try to make people aware of the risks they are taking (pretend headlines in the paper about failed systems?).

Staying Current / Learning

Hexawise: I see that you’ll be presenting at the StarEast conference in May. What could you share with us about what you’ll be talking about?

Dot: I have two tutorials on test automation. One is aimed mainly at managers who want to ensure that a new (or renewed) automation effort gets off “on the right foot”. There are a number of things that should be done right at the start to give a much better chance of lasting success in automation.

My other tutorial is about Test Automation Patterns (using the wiki). Here we will look at common problems on the technical side (rather than management issues), and how people have solved these problems - ideas that you can apply. This is also a code-free tutorial - we are talking about generic technical issues and patterns, whatever test execution tool you use.

Hexawise: What advice do you have for people attending software conferences so that they can get more out of the experience?

Dot: Think about what you want to find out about before you come, and look through the programme to decide which presentations will be most relevant and helpful for you. It’s very frustrating to realise at the end of the conference that you missed the one presentation that would have given you the best value, so do your homework first!

But also realise that the conference is more than just attending sessions. Do talk to other delegates - find out what problems you might share (especially if you are in the same session). You may want to skip a session now and then to have a more thorough look around the Expo, or to have a deeper conversation with someone you have met or one of the speakers. The friends you make at the conference can be your “support group” if you keep in touch afterwards.

Hexawise: How do you stay current on improvements in software testing practices; or how would you suggest testers stay current?

Dot: I am fortunate to be invited to quite a few conferences and events. I find them stimulating and I always learn new things. There are also many blogs, webinars and online resources to help us all try to keep up to some extent.

Hexawise: What software testing-related books would you recommend should be on a tester’s bookshelf?

Dot: Mine, of course! ;-) I do hope that testers have a bookshelf! For testers, I recommend Lee Copeland’s book “A Practitioner’s Guide to Software Test Design” as a great introduction to many techniques. For a deep understanding, get Cem Kaner’s book “The Domain Testing Workbook”. I also like “Lessons Learned in Software Testing” by Kaner, Bach and Pettichord, and “Perfect Software and other illusions about testing” by the great Jerry Weinberg. But there are many other books about testing too!

Hexawise: What are you working on now?

Dot: I am working on a wiki called TestAutomationPatterns.org with Seretta Gamba. This is a free resource for system level automation, with lists of different issues or problems and resolving patterns to help address them. There are four categories: Process, Management, Design and Execution. There is a “Diagnostic” to help people identify their most pressing issue, which then leads them to the patterns to help. We are looking for people to contribute their own short experiences to the issues or patterns.

Dorothy Graham has been in software testing for over 40 years, and is co-author of 4 books: Software Inspection, Software Test Automation, Foundations of Software Testing and Experiences of Test Automation, and currently helps develop TestAutomationPatterns.org.

Dot has been on the boards of conferences and publications in software testing, including programme chair for EuroStar (twice). She was a founder member of the ISEB Software Testing Board and helped develop the first ISTQB Foundation Syllabus. She has attended Star conferences since the first one in 1992. She was awarded the European Excellence Award in Software Testing in 1999 and the first ISTQB Excellence Award in 2012.

Twitter: @DorothyGraham