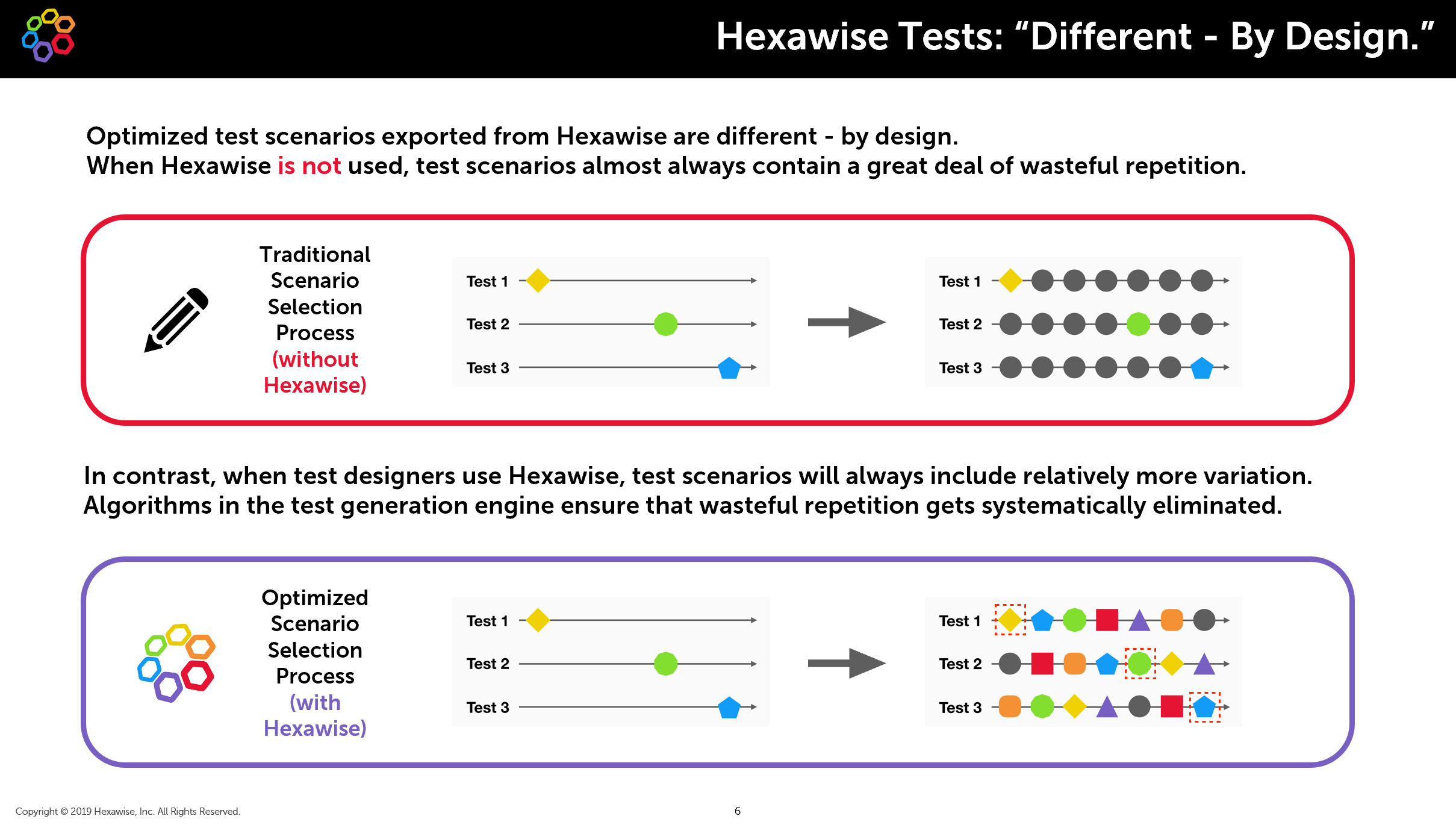

Hexawise Tests Are Robust and Thorough

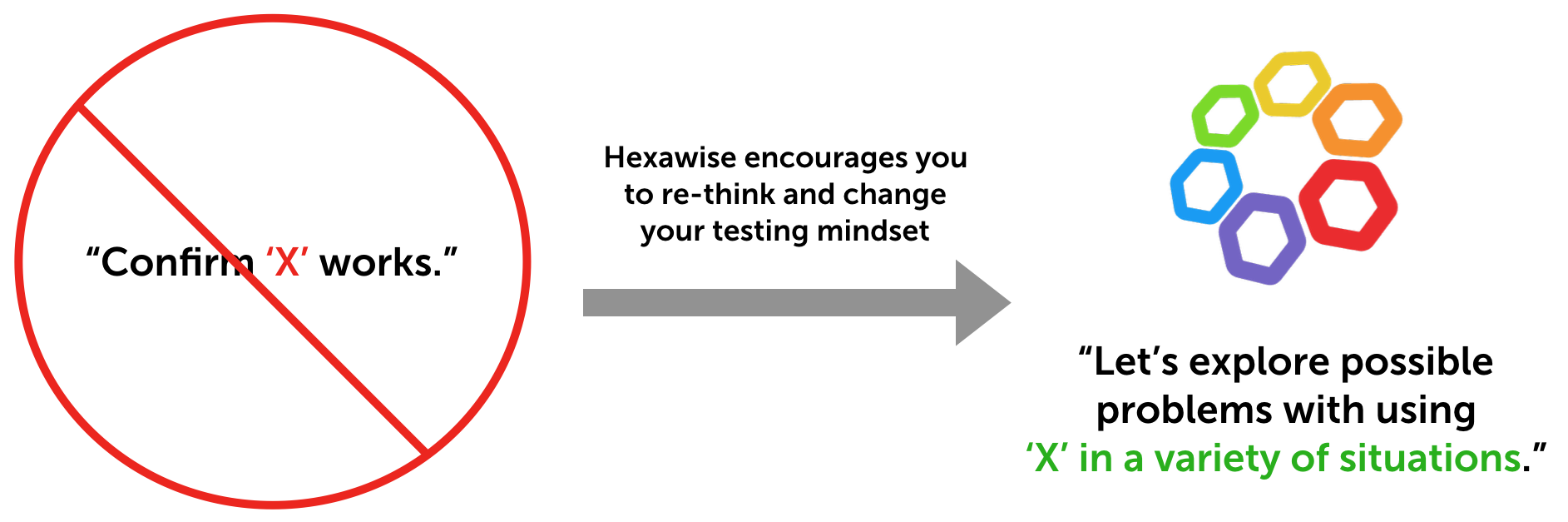

Hexawise generates different kinds of tests - by design. The objective behind a set of Hexawise tests is different from that of most manually designed test sets.

Rather than trying to “Confirm that X works correctly,” Hexawise tests are focused...

By John Hunter, Nov 20, 2019

BDD FTW! Part 1: What is BDD?

Behavior-driven development (BDD) allows the so-called three amigos to collaborate in the process of software development. The three amigos are development teams, who build the nitty-gritty details; QA teams, who are experts on how and...

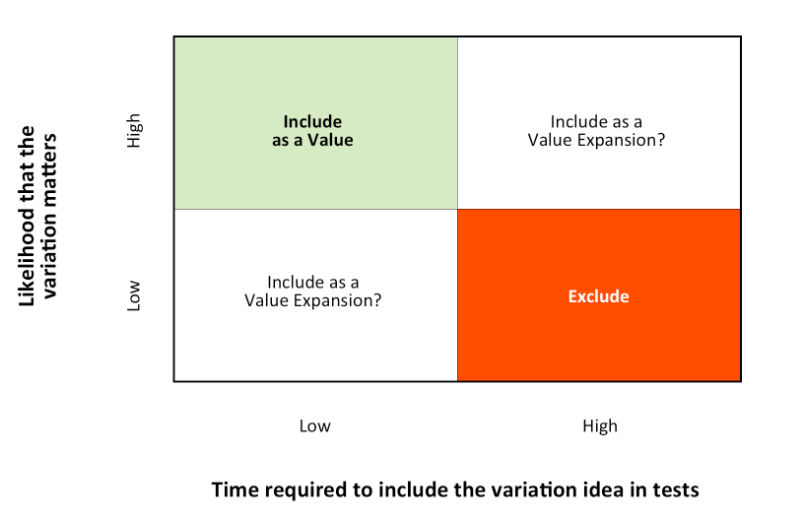

By Eric Musgrove, Nov 6, 2019

How to Identify Variation That Should be Tested

When testing software (or anything, for that matter), it is essential to determine what is important to test. Testing resources are limited (often, very limited) and we must be certain to focus our effort where it will do the most good. Hexawise...

By John Hunter, Oct 29, 2019

How a Book Club Impacted Our Software Development Practices

Hexawise started a book club in 2018. The book club evolved from a Slack channel for sharing interesting articles to help people improve their technical knowledge into a series of thought-provoking readings and discussions on a regular basis. The...

By Tyler Klose, Oct 18, 2019

Hexawise Test Design Professional Certification

The Hexawise Test Design Professional Certification is designed to educate software testers on modern software testing practices and on the use of Hexawise. Upon successful completion of the course, students receive a certification of Hexawise...

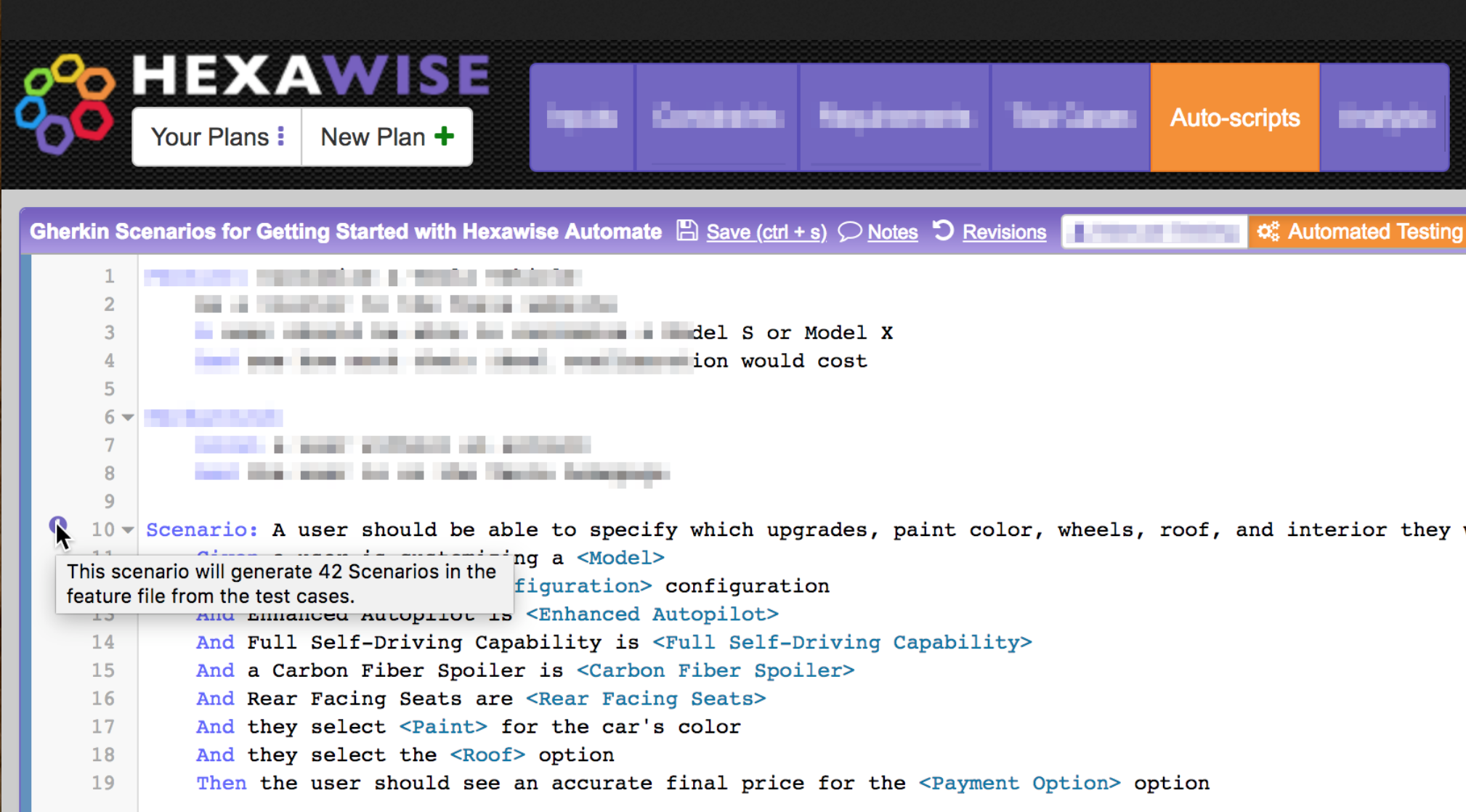

By John Hunter, Sep 19, 2019

Automating Gherkin Tests with Hexawise

Hexawise Automate is a tool that makes it easy to write behavior-driven development (BDD) automated acceptance tests in Gherkin format as data-driven scripts. These data-driven test scripts are based on a Hexawise test plan, so they are much more...

By John Hunter, Sep 5, 2019

Actionable Recommendations for Effective Software Testing

The journey to efficient software testing starts with a mindset & process shift – embracing a model-based combinatorial methodology. Traditional test design approaches often lead to the following problems:

- Direct duplicate tests due to...

By Matthew Dengler, Aug 27, 2019

Consulting at Hexawise: A Client’s User Group

As a Consultant at Hexawise, I work with users at our clients to ensure they are implementing and utilizing Hexawise to its highest potential. During the beginning stages of the Hexawise rollout process at companies, I work with stakeholders to...

By Conor Wolford, Aug 14, 2019

Software Testing Carnival #8: Artificial Intelligence

The Hexawise Software Testing carnival focuses on sharing interesting and useful blog posts related to software testing.

Getting Started with AI for Testing by Tariq King - "The field of AI is broad and so I recommend that you gain an...

By John Hunter, Jul 29, 2019

Soap Opera Testing Talk by Hans Buwalda

Hans Buwalda presented this talk on Soap Opera Testing at the Hexawise software testing talks during StarEast 2017.

Quote from Hans' article explaining Soap Opera Testing:

The end users were the people with the most practical knowledge, but...

By John Hunter, Apr 19, 2018